), code will probably be different from what I posted.

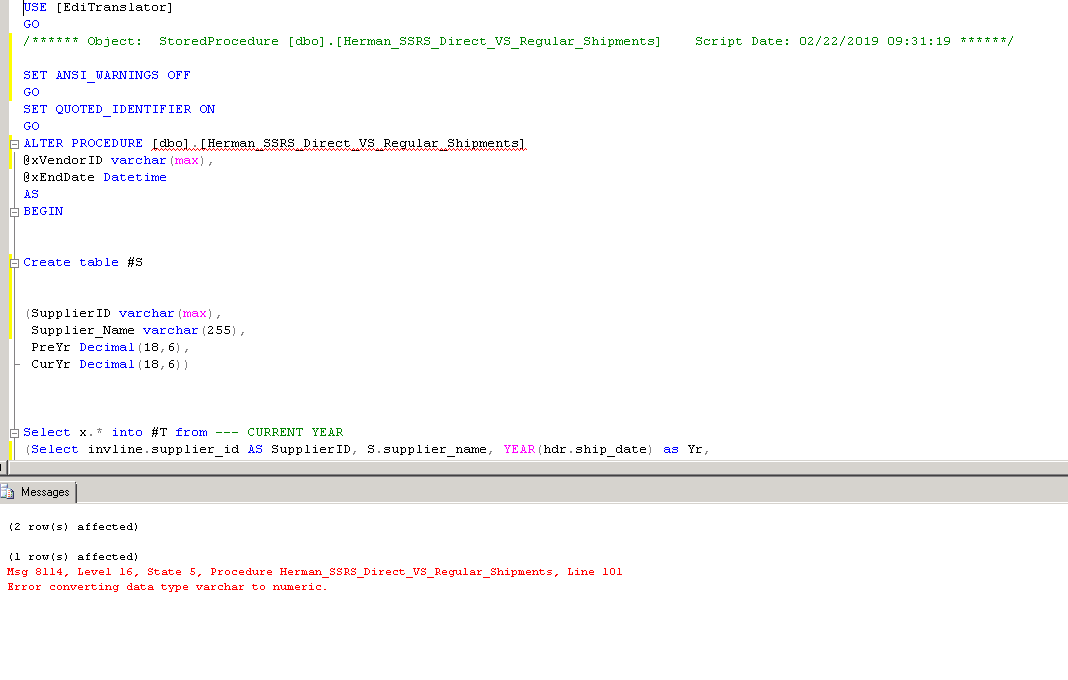

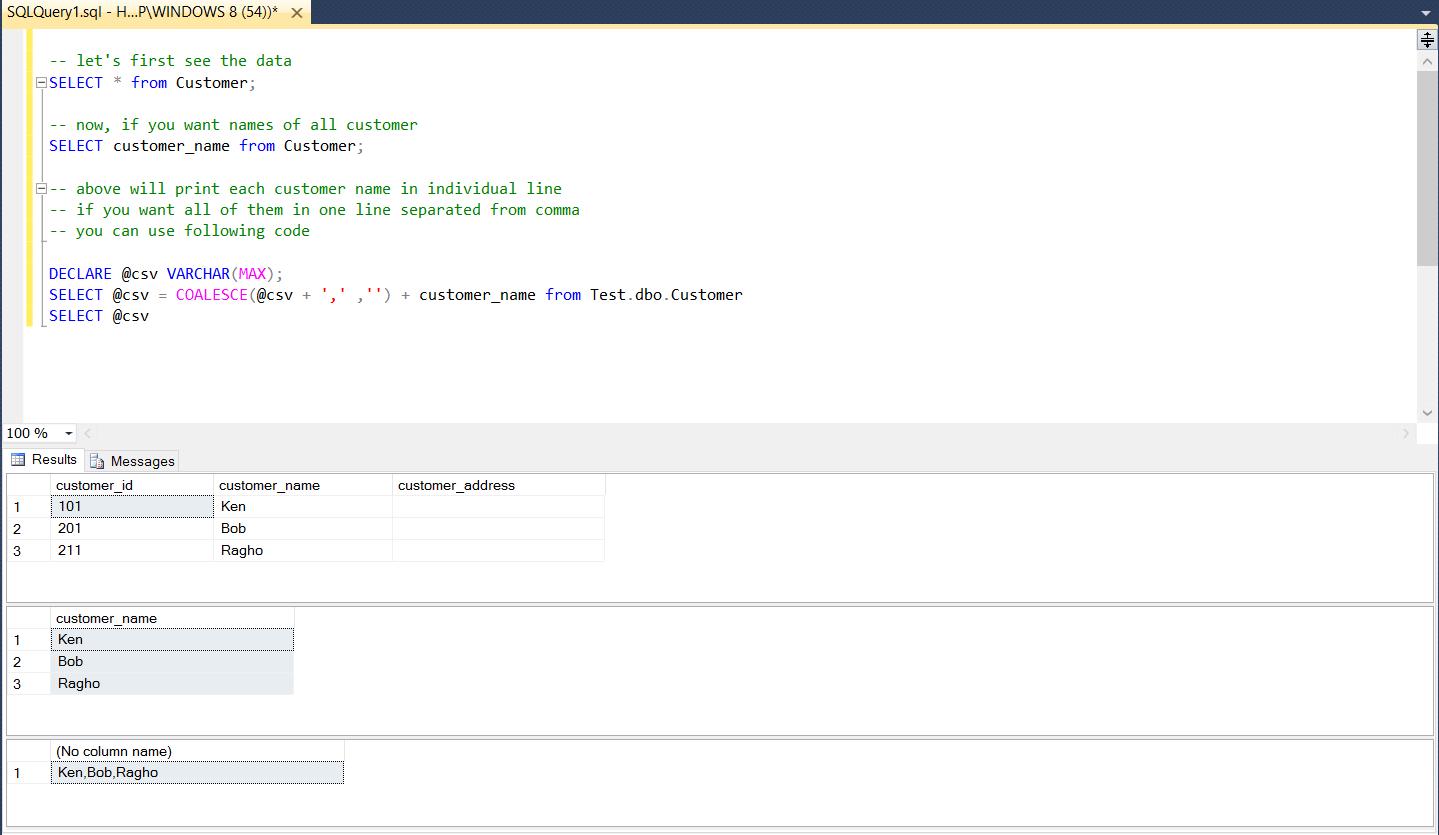

"Using SQL language" isn't quite enough. It is, as you said, a language, but each database has its own flavor, especially when dealing with date values. Functions differ.

For example, in Oracle, this is what you might do. The best option is to work with dates, not strings. Therefore, convert everything else to valid date values. Let's presume that the app_date1 table's first_date and end_date columns' datatype is date (as you didn't say otherwise, and their names suggest so).

I'm setting the date format so that you'd know what is what (e.g. if you see an all-numeric date, is it 3rd of June or 6th of March?); you probably don't have to do that: `SQL> alter session set nls_date_format = 'dd.mm.yyyy';`

As for end_date, which is stored as a string (note that this was a bad decision; always store dates and timestamps in columns of the appropriate datatype), you'd apply the to_date function with a format model that matches the string, along the lines of `to_date('Friday, August 25, 2023', <format model>, 'nls_date_language = english')`.

As for start_date, which is a timestamp, cast it to the date datatype, e.g. `cast(systimestamp as date)`.

It means that the final query might look like this: `select aa.sim ... on aa.first_date = cast(bb.startdate as date)`

When you want to convert a string to a number, you need to be sure which format the string uses; in English, for instance, the decimal separator is a point.

Once again: if you use a different database (such as MySQL, PostgreSQL or MS SQL Server), the code will probably be different from what I posted.

A similar approach also works for converting to Float types, as in the PySpark examples that follow.
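The point about knowing the format before converting is not specific to Oracle. Here is a minimal Python sketch of the same idea, using only the standard library. The helper names `parse_date` and `parse_number` and the sample values are made up for illustration, and the English day and month names rely on Python's default "C" time locale.

```python
from datetime import date, datetime


def parse_date(text: str, mask: str) -> date:
    """Parse a date string with an explicit format mask (hypothetical helper)."""
    return datetime.strptime(text, mask).date()


def parse_number(text: str, decimal_sep: str = ".") -> float:
    """Parse a number whose decimal separator is stated by the caller."""
    return float(text.replace(decimal_sep, "."))


# The same text means different dates under different masks.
ambiguous = "03.06.2023"  # made-up sample value
day_first = parse_date(ambiguous, "%d.%m.%Y")    # read as day.month.year
month_first = parse_date(ambiguous, "%m.%d.%Y")  # read as month.day.year

# A date stored as a string: parse it once, then work with a real date.
# %A and %B match English names under Python's default "C" time locale.
end_date = parse_date("Friday, August 25, 2023", "%A, %B %d, %Y")

# A timestamp: drop the time part before comparing it with a date.
start_ts = datetime(2023, 8, 25, 14, 30, 15)  # made-up sample timestamp
same_day = start_ts.date() == end_date

print(day_first, month_first, end_date, same_day)
print(parse_number("12.5"), parse_number("12,5", decimal_sep=","))
```

As in the SQL version, everything is converted to a real date first and only then compared, so the comparison never depends on how a string happens to be written.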

This simple PySpark article shows different ways to convert a DataFrame column from String Type to Double Type. Note that the salary column is a string type.

2. withColumn() – Convert String to Double Type

First, use the PySpark DataFrame withColumn() transformation to convert the salary column from String Type to Double Type. withColumn() takes the name of the column you want to convert as its first argument; for the second argument, apply the casting method cast(). Either `df2 = df.withColumn("salary",df.salary.cast('double'))` or `df2 = df.withColumn("salary",df.salary.cast(DoubleType()))` works; the second form needs `from pyspark.sql.types import DoubleType`. If you want to round the decimal value, use the round() function, imported with `from pyspark.sql.functions import col, round`. The result keeps the columns `|firstname|age|isGraduated|gender|salary|`.

3. Using selectExpr() – Convert Column to Double Type

The following example uses the selectExpr() transformation of DataFrame in order to change the data type: `df3 = df.selectExpr("firstname","age","isGraduated","cast(salary as double) salary")`

4. Using PySpark SQL – Cast String to Double Type

Inside a SQL expression you can't call the Column cast() method; Spark SQL provides data type functions for casting instead. Below, DOUBLE(column name) is used to convert to Double Type. Register the DataFrame as a view with `df.createOrReplaceTempView("CastExample")`, then run `df4=spark.sql("SELECT firstname,age,isGraduated,DOUBLE(salary) as salary from CastExample")`.

For comparison, SSIS cast expressions look different again: `(DTSTR,1,1252)5` casts an integer to a character string using the 1252 code page, and `(DTWSTR,3)'Cat'` casts a three-character string to double-byte characters. A further cast of that kind converts an integer to a decimal with a scale of two.

1. Convert String Type to Double Type Examples

The following PySpark examples convert String Type to Double Type. If you want to convert to Float Type instead, just replace Double with Float.

- `df.withColumn("salary",df.salary.cast('double'))`
- `df.withColumn("salary",df.salary.cast(DoubleType()))`
- `df.withColumn("salary",col("salary").cast('double'))`
- `df.withColumn("salary",round(df.salary.cast(DoubleType()),2))`
- `df.select("firstname",col("salary").cast('double').alias("salary"))`
- `df.selectExpr("firstname","cast(salary as double) salary")`
- `spark.sql("SELECT firstname,DOUBLE(salary) as salary from CastExample")`
